Cypress Atlanta Launches Plain English Test Automation Beta

Atlanta-based Cypress has moved its natural-language feature into beta, giving teams a way to write Cypress tests in plain English instead of scripting every step in JavaScript. Rather than requiring someone to hand-code each interaction, the system translates a description of user behavior into executable Cypress code.

That shift matters because test creation is often a bottleneck between product intent and release readiness. A team member can describe a workflow, such as logging in, opening a dashboard, and confirming that a chart appears, and the platform generates the underlying testing logic from there.
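To make that workflow concrete, here is a minimal sketch of the kind of Cypress code such a description might produce. It is not Cypress's actual translation method or output format: the `Step` type, the `renderStep` and `renderSpec` helpers, the `/login` route, and every `data-cy` selector are hypothetical and exist only for illustration. The emitted commands (`cy.visit`, `cy.get`, `.type`, `.click`, `.should`) are standard Cypress commands.

```typescript
// Hypothetical structured form of the plain-English workflow described above.
// The step kinds, route, and selectors are assumptions made for this sketch.
type Step =
  | { kind: "visit"; path: string }
  | { kind: "type"; selector: string; text: string }
  | { kind: "click"; selector: string }
  | { kind: "expectVisible"; selector: string };

// Render one step as a single line of Cypress test code.
function renderStep(step: Step): string {
  switch (step.kind) {
    case "visit":
      return `cy.visit(${JSON.stringify(step.path)});`;
    case "type":
      return `cy.get(${JSON.stringify(step.selector)}).type(${JSON.stringify(step.text)});`;
    case "click":
      return `cy.get(${JSON.stringify(step.selector)}).click();`;
    case "expectVisible":
      return `cy.get(${JSON.stringify(step.selector)}).should("be.visible");`;
  }
}

// Wrap the rendered steps in a describe/it block, as a spec file would.
function renderSpec(title: string, steps: Step[]): string {
  const body = steps.map((step) => "    " + renderStep(step)).join("\n");
  return [
    `describe(${JSON.stringify(title)}, () => {`,
    `  it("follows the described workflow", () => {`,
    body,
    `  });`,
    `});`,
  ].join("\n");
}

// "Log in, open the dashboard, and confirm that a chart appears."
const dashboardFlow: Step[] = [
  { kind: "visit", path: "/login" },
  { kind: "type", selector: "[data-cy=email]", text: "user@example.com" },
  { kind: "type", selector: "[data-cy=password]", text: "example-password" },
  { kind: "click", selector: "[data-cy=submit]" },
  { kind: "click", selector: "[data-cy=nav-dashboard]" },
  { kind: "expectVisible", selector: "[data-cy=usage-chart]" },
];

console.log(renderSpec("dashboard chart", dashboardFlow));
```

The printed text is an ordinary spec that a reviewer could read, edit, and commit like any hand-written test.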

For Georgia startups and product teams working with limited engineering bandwidth, this could widen participation in quality assurance. It does not remove the need for technical oversight, but it may reduce the amount of specialized scripting knowledge required to build useful test coverage.

Natural Language Processing Meets DOM Element Detection

The beta is built around a natural-language layer that interprets written instructions and turns them into runnable test code. Cypress is also positioning the system to identify relevant DOM elements automatically, which could help teams avoid some of the brittle selector choices that often make end-to-end tests harder to maintain.

That matters in a very practical way. Automated tests tend to become unreliable when selectors are too tied to presentation details or when they are written inconsistently across a growing application. If AI-assisted generation helps teams produce cleaner selectors from the start, it may reduce some of the false failures that slow release cycles and drain engineering time.
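A rough way to picture the difference between brittle and cleaner selectors is a small heuristic that rates a CSS selector by how tightly it is tied to presentation. The sketch below is an illustration only, not how the Cypress beta chooses selectors: `rateSelector` and its three ratings are hypothetical, and the rules loosely follow the common guidance to prefer dedicated test attributes such as `data-cy` over classes and positional selectors.

```typescript
type Stability = "stable" | "moderate" | "brittle";

// Heuristic rating of a CSS selector. The rules are simplified assumptions
// for illustration and will misjudge some real-world selectors.
function rateSelector(selector: string): Stability {
  // Dedicated test attributes are decoupled from styling and layout.
  if (/\[data-(cy|test|testid)(=|\])/.test(selector)) {
    return "stable";
  }
  // Positional pseudo-classes break when sibling order changes.
  const positional = /:nth-(child|of-type)\(/.test(selector);
  // Class names usually exist for styling and change with redesigns.
  const usesClass = /\.[A-Za-z_-]/.test(selector);
  // Long child-combinator chains break when markup is restructured.
  const deepChain = selector.split(">").length - 1 >= 2;
  if (positional || usesClass || deepChain) {
    return "brittle";
  }
  // IDs, tag names, and simple attribute selectors fall in between.
  return "moderate";
}

const samples = [
  "[data-cy=submit]",
  "#login-form",
  ".btn.btn-primary",
  "div > ul > li:nth-child(3)",
];

for (const selector of samples) {
  console.log(selector + " -> " + rateSelector(selector));
}
```

A check like this could run in code review regardless of whether a test was written by hand or generated.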

This does not mean flaky tests disappear on their own. UI changes, unstable environments, and weak test design can still create failures. Even so, the feature points toward a workflow where teams spend less time writing repetitive syntax and more time defining what a test actually needs to validate.

Model Context Protocol Provides Real-Time Application Access

Cypress is also tying the beta to Model Context Protocol, or MCP, which is meant to give the AI better visibility into what the application is doing in real time. That added context could make test generation more grounded than generic AI coding tools that work from prompts alone.

In practice, that means the system is not only guessing from a written request. It can work from the actual browser state, page structure, and application behavior in a way that may improve how it maps natural-language instructions to real test steps.

For teams handling dynamic interfaces, role-based views, or frequently changing product screens, that context layer could be one of the more meaningful parts of the release. It suggests Cypress is not simply adding AI for convenience; it is trying to make generated tests more aware of the application they are supposed to validate.

Why This Could Matter for Atlanta Tech and Georgia Startups

The local relevance has less to do with a labor shortage than with how lean teams divide QA work. Many Atlanta tech teams and Georgia startups operate with limited engineering resources, which means QA work is often distributed across developers, product staff, and release managers instead of being owned by a large dedicated testing department.

A plain-English workflow for Cypress test automation could help in several ways:

- Product teams can describe expected behavior more directly

- Junior contributors can participate in test creation sooner

- Developers may spend less time writing repetitive test scaffolding

- Growing teams can expand coverage without scaling QA headcount at the same pace

That does not make QA expertise unnecessary. Instead, it changes where expertise is applied. Senior engineers and test leads can focus more on strategy, review, and reliability standards while more of the initial test-writing work becomes accessible to the wider team.

For the Georgia tech scene, that is the stronger story: not that AI suddenly solves hiring constraints, but that it could help companies get more testing output from the teams they already have. That is also why the shift stands out for Peach State Tech as Atlanta software teams look for more practical ways to scale QA.

QA Wolf and Microsoft Playwright Present Direct Competition

Cypress is entering a competitive testing landscape, with QA Wolf emphasizing managed outcomes and Playwright continuing to win developer attention through performance and cross-browser flexibility. In that environment, Cypress needs a differentiator that feels meaningful rather than cosmetic.

Its strongest distinction here may be that the generated code remains visible and editable. Teams are not locked into a black-box output that lives inside a vendor-managed service. They can inspect the test logic, modify it, review it in version control, and extend it as application needs change.

That approach is likely to appeal to professional software teams that want AI assistance without giving up control of their test suites. It also fits the governance expectations that often emerge as startups mature into more structured engineering organizations.

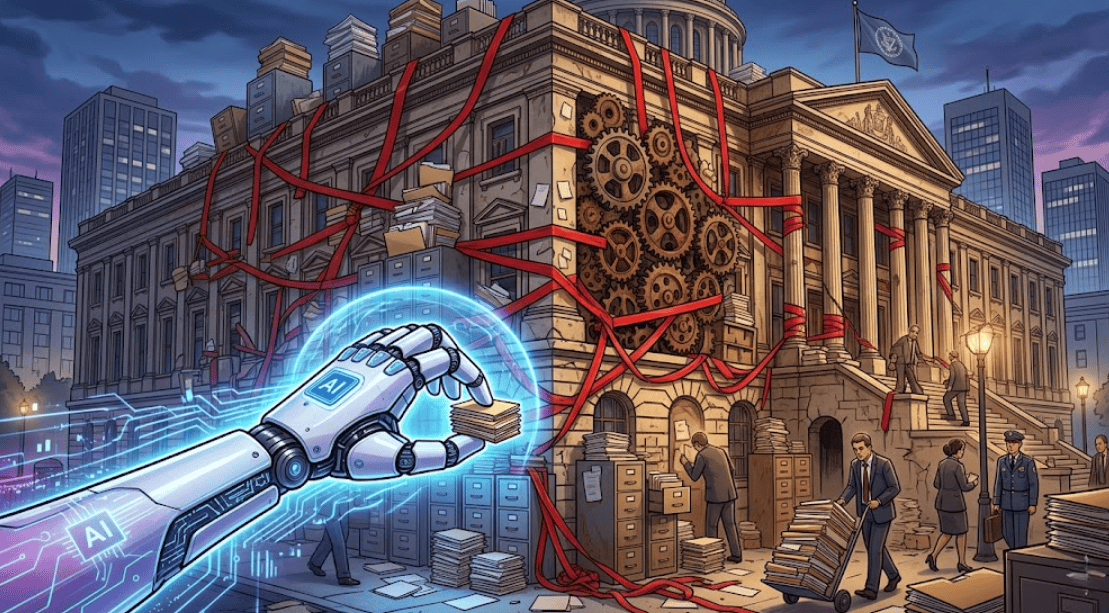

Addressing Technical Debt in Legacy Test Suites

One of the more practical use cases is test-suite cleanup. Many teams accumulate brittle or inconsistent tests during fast product cycles, especially when shipping pressure outruns process discipline. Over time, those suites become harder to maintain, harder to trust, and more expensive to keep updating.

A natural-language approach to Cypress test automation could make refactoring easier. Instead of unraveling a long chain of old selectors and unclear intent, teams may be able to restate the desired behavior in simpler language and generate a cleaner starting point for a modernized test.

For Atlanta business software teams and Georgia startups alike, that could make AI-assisted testing useful beyond greenfield development. The value may be just as strong in helping teams rebuild what they already have.

Beta Participation and Production Readiness Timeline

Cypress has indicated that general availability is expected later this year, while beta access is currently open to existing customers. The long-term impact will depend on how well the feature performs in real testing environments, especially in CI/CD pipelines where speed, reliability, and cost all matter.

That is an important point for teams evaluating the release. AI-generated testing is most compelling when it saves time without adding too much friction in execution, review, or computational expense. If the feature improves authoring but becomes expensive or inconsistent at scale, adoption could slow.

For now, the beta gives Georgia teams a practical reason to watch Cypress closely. If the product delivers on usability without sacrificing maintainability, it could become a meaningful tool for companies trying to strengthen software quality without expanding testing overhead at the same rate.
