What AI Image Generator Bias Looks Like

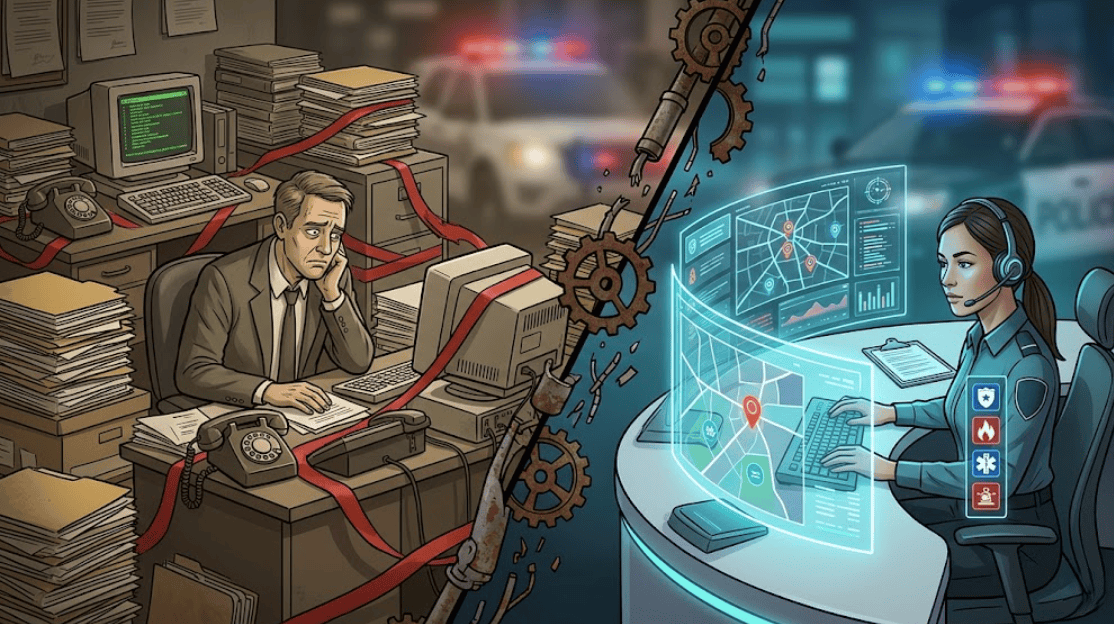

Ask an artificial intelligence tool to create an image of a “CEO at a board meeting,” and you will often get a middle-aged white man in a suit. Ask for a “nurse,” and the result may skew female. These patterns show how AI image generator bias can reinforce long-standing racial and gender stereotypes instead of reflecting present-day reality.

This is not random. It is a byproduct of how an AI model learns from massive training datasets collected across the internet. Because those datasets reflect historical imbalances and blind spots in data collection, models trained on them can reproduce both racial and gender bias, perpetuating stereotypes in the images they generate.

For brands, that means default outputs may not match the people they actually want to reach.

Common Examples of Biased AI Outputs

- CEOs shown primarily as white men

- Nurses shown primarily as women

- Leadership scenes with little visible diversity

- Inconsistent or inaccurate rendering of skin tones across generated images, which distorts representation

- Repetitive workplace visuals that do not reflect the real world

Why These Patterns Happen

- Generative AI models learn from historical online imagery

- Biased training data influences generative AI outputs

- Visual shortcuts reinforce old assumptions and implicit bias

- Algorithms scale existing patterns unless people intervene

Why Is Bias in AI Images a Problem for Brands?

For marketing teams, AI image generator bias is not just a technical concern. It is a brand risk that can weaken authenticity, create audience disconnect, and make campaigns feel less credible. When visuals rely on biased defaults, they can fail to reflect the minority communities and other demographic groups a brand is trying to reach.

If agencies use AI without review, they risk publishing imagery that feels generic, exclusionary, or out of touch. That is why teams are paying more attention to how AI image generation shapes audience perception.

How Biased Visuals Affect Marketing Performance

- They weaken authenticity

- They create a disconnect with target audiences

- They can undermine trust in the brand

- They make campaigns feel less local and less credible

- They increase the risk of publishing stereotypical or outdated imagery

How Inclusive Prompting Improves AI Outputs

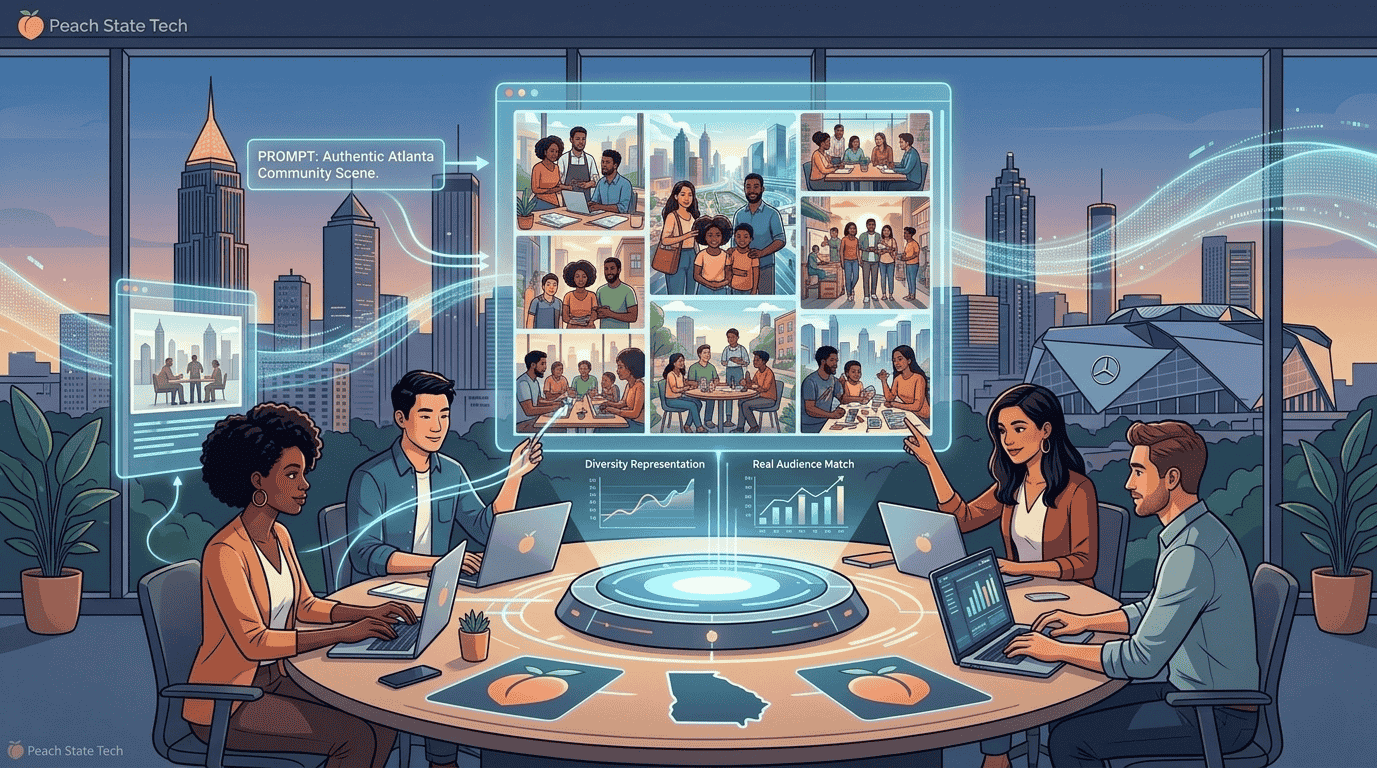

To address this and promote fairness, Atlanta agencies are making inclusive prompting a deliberate part of their creative process. This is where prompt engineering becomes essential.

Instead of using broad prompts, teams now specify details such as race, age, gender, setting, and context. Rather than asking for a “CEO in a meeting,” they may request a more specific scene that reflects the audience and environment they want to show.

This approach helps counter built-in assumptions and gives teams more control over representation. It also moves agencies away from a “prompt and post” mindset and toward more intentional mitigation strategies that improve quality before content ever goes live.

What Inclusive Prompting Looks Like in Practice

Teams can improve outputs by specifying:

- race or ethnicity

- age range

- gender mix

- professional setting

- cultural or geographic context

- ability and body diversity

Example of a Better Prompt

Instead of:

- A CEO in a meeting

Try:

- A diverse executive team, including Black and female leaders, in a modern Atlanta boardroom

That kind of prompt engineering gives creative teams more influence over how generative AI interprets the request.

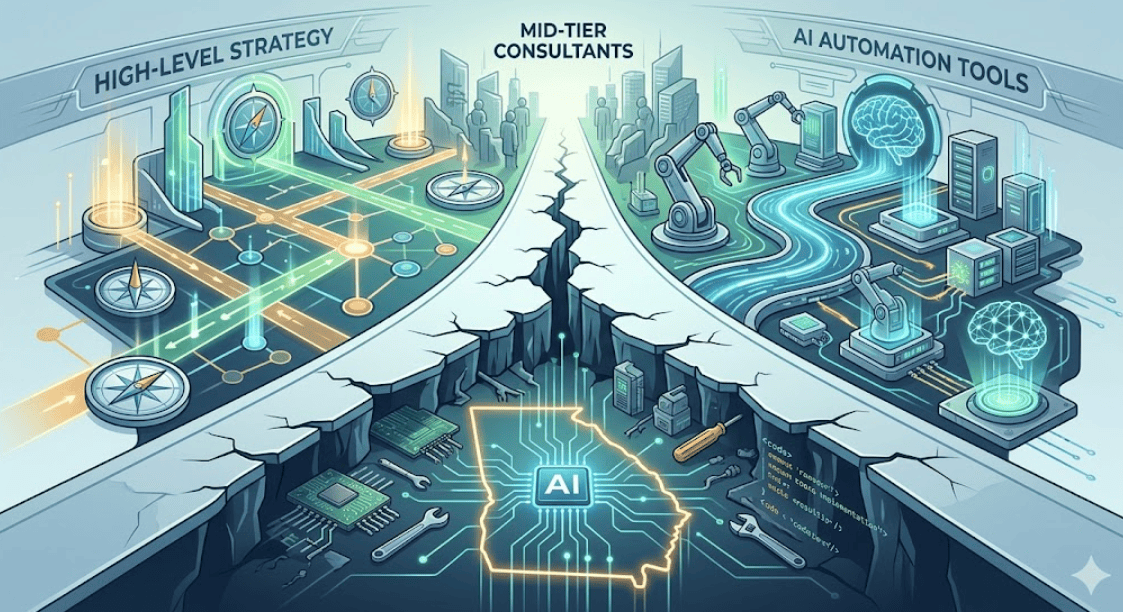

Why Agencies Use a Diversity Audit for AI Visuals

Prompting alone is not enough. That is why many firms are adding a diversity audit for AI visuals to their workflow.

Before an image is approved, teams review it for inclusive representation, realistic context, natural rendering, and audience fit. This step helps catch problems, including subtle AI biases, that even detailed prompts can miss.

It also serves as one of the most practical mitigation strategies available to teams working with generative tools today.

What a Diversity Audit Should Check

- inclusive representation across roles and settings

- realistic rendering of features and skin color

- cultural relevance and geographic accuracy

- whether the image reflects the intended audience

- whether stereotypes appear in subtle or obvious ways

Why This Step Matters

A diversity audit for AI visuals helps agencies slow down before publishing. Instead of accepting the first usable output, teams can assess whether the image actually feels authentic to the neighborhoods, customers, and business communities it is meant to represent.

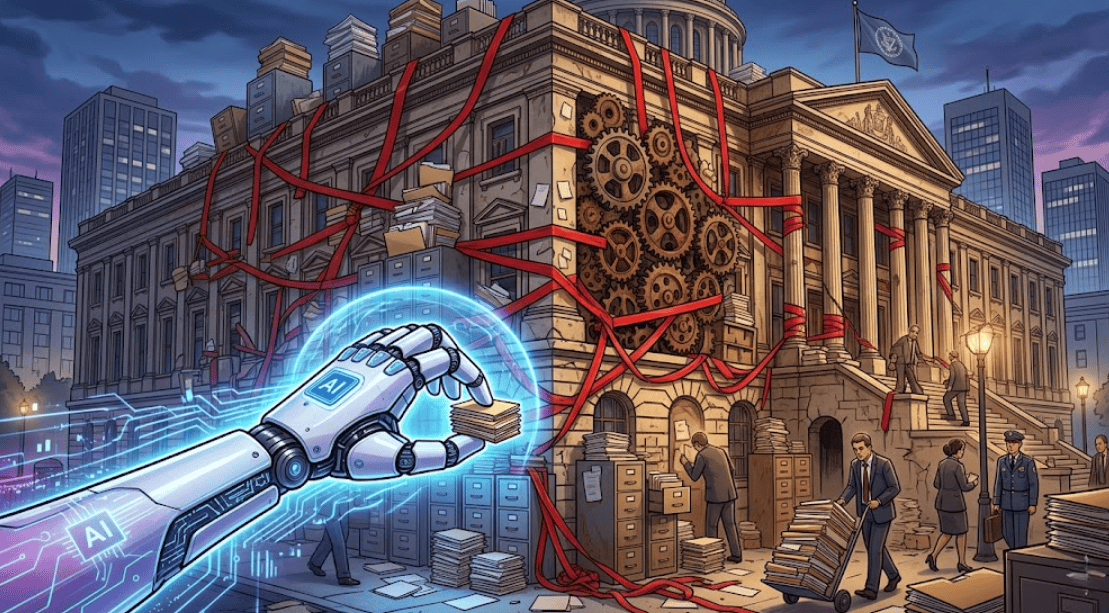

Why Human Oversight Still Matters

AI can increase speed and output volume, but it still lacks judgment. That is why human oversight in AI-generated content remains the most important quality control step.

Creative professionals are no longer spending all their time building visuals from scratch. Instead, they are evaluating outputs, refining direction, and deciding which images feel credible and appropriate. This is especially important when reviewing content for subtle signs of racial bias, gender bias, or other stereotypical patterns.

The strongest creative workflows treat AI as a production tool, not a final decision-maker. Humans still provide the cultural awareness and transparency that software cannot.

What Humans Still Do Better Than AI

- recognize nuance

- spot visual stereotypes

- understand local audience expectations

- judge authenticity and tone

- make final creative decisions

The New Creative Workflow

A modern workflow often looks like this:

- AI generates multiple image options

- Teams refine results through inclusive prompting

- Reviewers run a diversity audit for AI visuals

- Humans make the final call based on brand, audience, and context

That is where human oversight in AI-generated content becomes the true differentiator.

The Future of More Representative AI Visuals

Looking ahead, many observers in the AI industry believe the next step is building more localized and culturally aware systems. As generative AI evolves, agencies will likely push for tools trained on more representative and carefully curated data.

That could help reduce the gap between automated output and audience reality. But until those systems improve, businesses still need intentional prompts, review standards, and stronger mitigation strategies to avoid repeating the same visual defaults.

The future of AI image generation will not be shaped by automation alone. It will depend on whether creative teams are willing to guide these systems with purpose.

What Better AI Tools Could Eventually Deliver

- more accurate local context

- stronger representation across communities

- fewer default stereotypes

- more realistic visual diversity

- less dependence on manual correction after generation

AI is changing the speed and scale of content production, but it is not neutral. AI image generator bias shows up when systems rely too heavily on past patterns and unchecked assumptions.

Atlanta agencies are responding with better processes: inclusive prompting, structured review, and stronger human judgment. Adding a diversity audit for AI visuals and maintaining human oversight in AI-generated content helps teams create work that feels more accurate, relevant, and inclusive.

AI can generate options. People still decide what belongs in the final campaign.

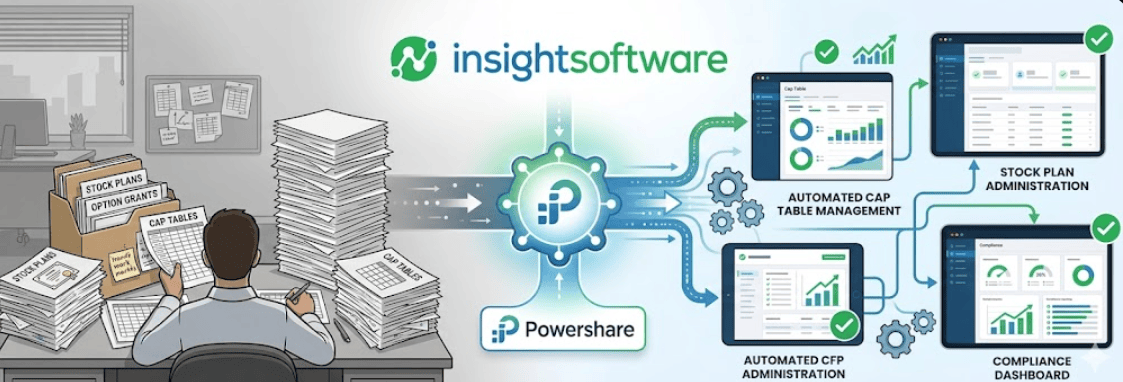

Need Help Using AI the Right Way?

AI tools can accelerate your marketing, but without the right strategy, they can miss the mark. Peach State Tech helps businesses use AI in ways that are efficient, accurate, and aligned with their audience.

Whether your team is exploring AI for design, content, or campaign production, we can help you build smarter, more inclusive workflows.