Atlanta Software Teams Need to Prepare for a New Compliance Phase

Software teams across Atlanta are entering a new phase of AI development, one shaped not only by product speed and market pressure, but also by regulation. As the EU AI Act moves through its staged implementation, companies building AI products for international markets may need to treat compliance as part of product development rather than a legal issue to address later.

That matters for Atlanta’s software, fintech, and healthtech sectors. If a product is placed on the EU market or used in the EU, the rules can apply regardless of where the company is based. For engineering teams, that means regulation may now affect system design, documentation, testing, oversight, and release processes.

For the broader Georgia tech scene, this is another sign that software development is becoming more structured and more accountable. Across the Peach State, companies aiming for enterprise growth or international reach may need to build AI systems with stronger governance from the start.

The Real EU AI Act Timeline

The most important thing to get right is the timeline. The AI Act does not hinge on a single trigger date, and claims that everything changes in March 2026 are unsupported. Implementation happens in stages, so it is more useful to understand the overall rollout than to fixate on one dramatic deadline.

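Under the schedule as adopted, the regulation entered into force on 1 August 2024 and phases in its obligations: prohibited practices from 2 February 2025, general-purpose AI model obligations from 2 August 2025, and most high-risk requirements from 2 August 2026, with high-risk rules for AI embedded in already regulated products following in August 2027. For software companies, the practical point is that more of the framework takes effect each year while enforcement expectations become clearer.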
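For teams that want the staged rollout in a form they can check against a release calendar, a minimal sketch follows. It assumes the application dates in the regulation as adopted (Regulation (EU) 2024/1689). The names `MILESTONES`, `obligations_in_effect`, and `next_milestone` are illustrative, the labels are simplified summaries rather than legal text, and the dates would need updating if the EU amends the schedule.

```python
from datetime import date
from typing import Optional, Tuple

# Assumed application dates from the AI Act as adopted.
# Labels are simplified summaries, not legal wording.
MILESTONES = [
    (date(2024, 8, 1), "Regulation enters into force"),
    (date(2025, 2, 2), "Prohibited practices and AI literacy provisions apply"),
    (date(2025, 8, 2), "General-purpose AI model obligations apply"),
    (date(2026, 8, 2), "Most remaining provisions apply, including many high-risk rules"),
    (date(2027, 8, 2), "High-risk rules for AI in already regulated products apply"),
]


def obligations_in_effect(on: date) -> list:
    """Return the labels of every milestone that has started on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date) -> Optional[Tuple[date, str]]:
    """Return the earliest milestone strictly after `on`, or None if all have passed."""
    upcoming = [(start, label) for start, label in MILESTONES if start > on]
    return min(upcoming) if upcoming else None


if __name__ == "__main__":
    today = date.today()
    for label in obligations_in_effect(today):
        print("in effect:", label)
    print("next:", next_milestone(today))
```

A planning script like this is most useful when a product roadmap spans more than one milestone, since it makes visible which obligations a planned release date will fall under.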

That matters to the Georgia tech scene because many local companies are not building for a purely local audience. A platform developed in Atlanta can still face European compliance obligations if it reaches users, customers, or partners in EU markets.

High-Risk AI Systems Carry the Heaviest Burden

The EU AI Act uses a risk-based structure. Some uses are prohibited outright, while high-risk systems face the strictest operational requirements. The law covers higher-risk use cases in areas such as employment, education, critical infrastructure, and certain financial or public-service contexts.

For companies in Atlanta building AI for hiring, lending, health-related workflows, or other sensitive business functions, this raises the bar. Compliance can include risk management, technical documentation, data governance, record-keeping, human oversight, accuracy, robustness, and cybersecurity measures.

The burden is not just proving that a model works, but showing that it can be monitored, reviewed, and governed responsibly. That is especially relevant in the Peach State, where many growing companies are trying to move from startup-style speed to more mature operating models that can support larger markets and more demanding customers.

Why the EU AI Act Matters to Georgia Companies

Software distribution is not limited by geography. A product built in Atlanta can still fall within the scope of EU rules if it is placed on the EU market, put into service there, or if its output is used in the EU. That is why the EU AI Act matters even to companies whose teams, offices, and investors are all based in Georgia.

There is also a broader strategic issue. European regulation often shapes product decisions beyond Europe itself because companies prefer building to one standard rather than maintaining separate systems for different jurisdictions. The same pattern played out with privacy rules such as the GDPR, and it is now becoming more relevant to AI.

For the Georgia tech scene, this creates pressure to mature quickly. Startups and mid-market firms across the Peach State can no longer assume that compliance belongs only to legal teams or global corporations. It is becoming part of how software products are built, documented, and trusted.

Fintech and Healthtech May Feel the Pressure First

Atlanta’s fintech and healthtech sectors are especially likely to feel the impact. These are areas where AI can affect consequential decisions, which makes documentation, traceability, explainability, and oversight harder to treat as optional extras.

Three pressure points stand out in particular:

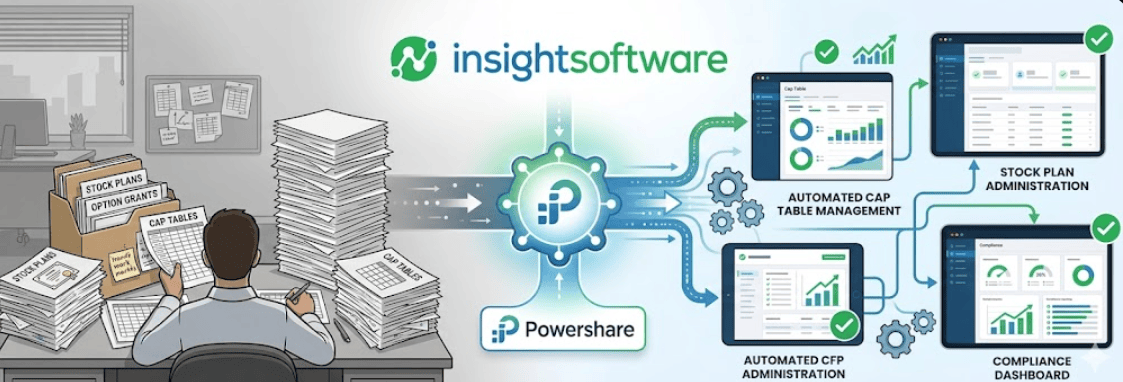

- Model transparency: Teams may need to explain how outputs are generated and what limits or risks come with those outputs.

- Data governance: Companies may need clearer records of training data sources, lawful acquisition, and data quality controls.

- Human oversight: Systems used in higher-risk contexts may need meaningful ways for people to review, intervene, or override automated outputs.
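The human oversight point in particular can be made concrete in code. The sketch below shows one possible shape for a review gate: adverse or low-confidence outputs are held for a person, a reviewer can confirm or override, and each step is written to an audit trail. Everything here is hypothetical, including the `Decision` class, the `REVIEW_THRESHOLD` value, and the rule that every "decline" is reviewed. A real policy would come from a team's own risk assessment.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

REVIEW_THRESHOLD = 0.90  # hypothetical cutoff; set from your own risk assessment


@dataclass
class Decision:
    subject_id: str
    model_output: str                   # e.g. "approve" or "decline"
    confidence: float                   # model-reported score in [0, 1]
    final_output: Optional[str] = None  # stays None until finalized
    reviewed_by: Optional[str] = None
    audit_log: list = field(default_factory=list)

    def log(self, event: str) -> None:
        # Timestamped trail supporting record-keeping and later review.
        self.audit_log.append((datetime.now(timezone.utc).isoformat(), event))


def needs_human_review(d: Decision) -> bool:
    # Assumed policy: adverse outcomes and low-confidence outputs go to a person.
    return d.model_output == "decline" or d.confidence < REVIEW_THRESHOLD


def route(d: Decision) -> Decision:
    """Finalize automatically only when the policy does not require review."""
    if needs_human_review(d):
        d.log("queued for human review")
    else:
        d.final_output = d.model_output
        d.log("auto-finalized")
    return d


def human_review(d: Decision, reviewer: str, outcome: str) -> Decision:
    """Record a reviewer's confirmation or override of the model output."""
    if d.final_output is not None:
        raise ValueError("decision already finalized")
    d.reviewed_by = reviewer
    d.final_output = outcome
    verb = "confirmed" if outcome == d.model_output else "overrode"
    d.log(f"{reviewer} {verb} model output '{d.model_output}' with '{outcome}'")
    return d
```

The design choice worth noting is that the model output and the final output are stored separately, so an override never erases what the model originally produced.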

For teams relying on third-party models or external AI APIs, the challenge can extend beyond internal code. It may also involve supplier documentation and a clearer understanding of dependencies across the AI stack.

This is where the Georgia tech scene may start to split between companies that treat AI governance as foundational and those that still view it as something to address later. In the Peach State, sectors like fintech and healthtech may face this divide sooner than others.

Compliance Can Also Strengthen Market Position

Regulation is often framed as a brake on innovation, but it can also create separation in the market. Companies that build trustworthy systems earlier may be better positioned to win enterprise customers, support cross-border growth, and handle deeper procurement scrutiny.

That makes compliance more than a legal safeguard. It can become part of product positioning. In a market where buyers are increasingly cautious about AI risk, documented controls and stronger governance can help a company look more mature, more reliable, and easier to trust.

This matters for Georgia because many of the companies shaping the state's innovation economy are now competing in markets where credibility matters as much as product velocity. Across the Peach State, firms that can demonstrate responsible AI practices may be better equipped to stand out with enterprise buyers and investors.

What Atlanta Engineering Teams Should Do Now

The practical response is to start treating AI compliance as an operating discipline. That does not mean slowing every product decision to a crawl. It means building more structure around AI systems before risk becomes harder to manage.

Teams should focus on:

- System inventory: Identify every AI model, feature, and external dependency in production or active development.

- Risk classification: Assess whether systems may fall under prohibited, high-risk, general-purpose AI, or transparency-related obligations.

- Documentation standards: Create repeatable ways to document data sources, testing methods, model limitations, monitoring, and oversight.

- Governance ownership: Assign clear responsibility across legal, engineering, product, and leadership teams.

- Workflow integration: Add compliance review points into existing development and release processes rather than treating them as last-minute checks.
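The steps above can be sketched as a small inventory record plus a release check that a CI pipeline could call. This is an illustration under stated assumptions, not a classification tool: `AISystem`, `RiskTier`, `HIGH_RISK_AREAS`, and `REQUIRED_DOCS` are hypothetical names, the area list is a simplified reading of the use cases mentioned earlier in this article, and the `triage` result is a first-pass flag for legal review. Prohibited-practice and general-purpose AI questions are deliberately left to that review.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskTier(Enum):
    HIGH = "high-risk"
    TRANSPARENCY = "transparency"
    MINIMAL = "minimal"


# Hypothetical tags, loosely based on the sensitive areas named in the article.
HIGH_RISK_AREAS = {"employment", "education", "critical-infrastructure", "credit", "health"}

# Hypothetical documentation checklist for systems flagged as high-risk.
REQUIRED_DOCS = {"data-sources", "testing", "limitations", "monitoring", "oversight"}


@dataclass
class AISystem:
    name: str
    owner: str                              # accountable team or person
    use_area: str                           # one tag describing the use case
    external_dependencies: list = field(default_factory=list)
    user_facing_generation: bool = False    # e.g. chatbots, generated content
    docs: set = field(default_factory=set)  # documentation already completed


def triage(system: AISystem) -> RiskTier:
    """First-pass flag only; the real classification belongs to legal review."""
    if system.use_area in HIGH_RISK_AREAS:
        return RiskTier.HIGH
    if system.user_facing_generation:
        return RiskTier.TRANSPARENCY
    return RiskTier.MINIMAL


def release_blockers(system: AISystem) -> list:
    """Return reasons a release should pause; an empty list means proceed."""
    blockers = []
    if not system.owner:
        blockers.append("no governance owner assigned")
    if triage(system) is RiskTier.HIGH:
        for missing in sorted(REQUIRED_DOCS - system.docs):
            blockers.append(f"missing documentation: {missing}")
    return blockers
```

For example, `release_blockers(AISystem("resume-ranker", "", "employment", docs={"testing"}))` would report the missing owner and the four missing documents, which is the kind of signal a release pipeline can surface before launch instead of after.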

These steps may sound operational, but they are increasingly strategic. For companies across the Georgia tech scene, the ability to connect product development with governance could become a meaningful advantage rather than a backend burden.

Trust Is Becoming a Product Requirement

The next phase of AI competition may not be defined only by speed. It may be shaped by which companies can show that their systems are reliable, explainable, and ready for scrutiny.

For Atlanta tech firms, the EU AI Act is a reminder that growth now depends on more than product ambition. It also depends on discipline. Teams that adapt early may be better prepared for enterprise sales, international expansion, and future regulation. Teams that delay may find that technical capability alone is no longer enough.

For more insight into how regulation, infrastructure, and innovation are reshaping the Peach State, follow Peach State Tech for continued coverage of the companies, trends, and market shifts shaping the Georgia tech scene.